Here, I present a novel implementation of well-established techniques that I am calling “Packed Geometry Maps”. By swizzling color channels and exploiting current compression techniques, packed geometry maps represent normal, height, and occlusion information in a single texture asset. This texture is fully backwards-compatible with standard normal maps in the Unity game engine, and can be generated automatically from traditional input texture maps through an extension of the Unity editor. The result reduces texture memory requirements by 2/3 and requires no changes to developer workflow.

Normal Maps are great, but they could be better.

Normal maps are not a new invention. The initial concept was first published sometime around 1998 as a technique for storing high-frequency surface data to be re-mapped onto low-resolution geometry. Since then, normal maps in some form or another have become ubiquitous in realtime computer graphics, where the geometric resolution of models may be limited.

The basic concept is to store surface normals (the direction a surface is “facing”) in an image. When you need this information, such as when calculating shading on an object, you simply look up the surface normal from the texture (and, depending on your implementation, re-project it into a different basis). The end result is low-resolution models being shaded as though they were higher detail, capturing smaller bumps and cracks in their surface which aren’t actually represented by geometry.

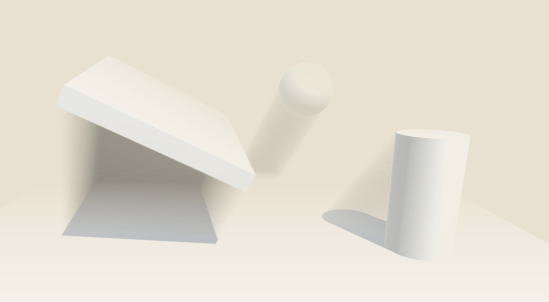

A comparison of a simple sphere without and with normal mapping. The underlying geometry is the same for both.

Normal maps are fantastic, but as computer hardware advances, other techniques have become common alongside normal mapping. Runtime tessellation, for example, produces additional geometric detail to better represent rough surfaces and produce more accurate silhouettes, at the expense of additional texture data. Ambient occlusion maps are used to represent light absorption and scattering in complex geometry. All of these newer effects require new texture data as input. The normal map should not be discounted or ignored, but there’s definitely room for improvement here!

What’s In A Normal Map?

Surface normals are typically represented by colors, using the standard RGB format. The red channel is used to represent the “x” component of the normal vector (usually the coordinate along the surface tangent), green for “y” (bitangent), and blue for “z” (normal). Together, these components form a unit-length vector, which is stored in the final normal map texture and used in lighting calculations.

You might have already noticed an optimization here. If the normal vector is known to be unit-length, then why are we storing three components? In most situations, the Z component of the surface normal in tangent-space is positive, and close to one. Using these assumptions, we can remove any ambiguity in solving for the Z component, and it can be reconstructed using only the X and Y components, which contain more critical signed data.

This allows us to completely remove one color channel from our normal map texture, while still preserving all the information required to properly shade an object!
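To make the reconstruction concrete, here is a minimal CPU-side sketch in Python. It assumes the usual convention of storing each signed component remapped from [-1, 1] into an 8-bit channel, and it assumes Z is non-negative; the function names are illustrative only.

```python
import math

def decode_channel(byte_value):
    """Map an 8-bit channel value (0..255) to a signed component in [-1, 1]."""
    return (byte_value / 255.0) * 2.0 - 1.0

def reconstruct_normal(x_byte, y_byte):
    """Rebuild a tangent-space normal from its stored X and Y channels.

    Assumes the original normal was unit-length with a non-negative Z.
    """
    x = decode_channel(x_byte)
    y = decode_channel(y_byte)
    # Clamp at zero: quantization can push x^2 + y^2 slightly above 1.
    z = math.sqrt(max(0.0, 1.0 - x * x - y * y))
    return (x, y, z)
```

The clamp matters in practice, because compression and 8-bit rounding mean the stored X and Y rarely describe an exactly unit-length vector.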

In fact, the Unity game engine already does this! Unity uses a texture compression format called DXT5nm, a specific use-case of standard DXT5 compression. This saves a great deal of memory at the cost of some precision and image quality. DXT5 has a fixed compression ratio, so the overall quality of the output image depends on the content of the image itself. I won’t get into details here, but images containing shades of a single color compress with higher quality than images with multiple colors. Unity disposes of the “Z” component of normal maps, and swizzles the X component into the alpha channel to take advantage of the inherent strengths of the format and reduce the number of visible artifacts after compression.

What About Those Two Extra Channels?

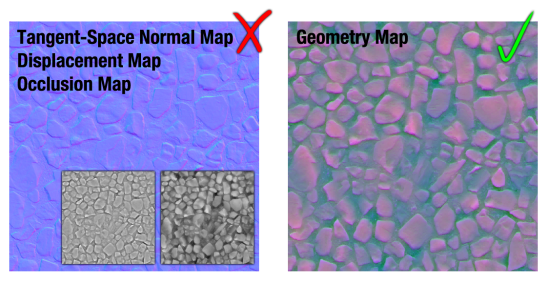

So, the Unity game engine simply writes zeroes into two of the color channels used by normal maps. Granted, this increases the quality of the texture due to the subtleties of DXT5 compression, but we could easily store two more low-frequency channels without a significant loss in quality. For this, I’ve opted to store ambient occlusion in red, and displacement in blue. By placing ambient occlusion (which tends to have the lowest contrast of all the input maps) in red, we can generally reduce the number of artifacts visible in the green channel of the surface normals, where they are most noticeable. Imprecision and compression artifacts in displacement and occlusion maps are also much less visible, due to the low-frequency nature of the types of data typically stored here.
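The channel layout described above can be sketched per texel as follows. This is an illustration in Python rather than the editor extension itself; the function name is hypothetical, and inputs are assumed to be 8-bit values taken from a conventional RGB normal map and two grayscale maps.

```python
def pack_geometry_texel(normal_rgb, occlusion, displacement):
    """Combine three source texels into one RGBA texel.

    normal_rgb: (r, g, b) bytes from a conventional normal map (x, y, z).
    occlusion, displacement: single grayscale bytes.
    """
    normal_x, normal_y, _normal_z = normal_rgb  # Z is dropped and rebuilt later
    return (
        occlusion,     # R: ambient occlusion
        normal_y,      # G: normal Y, where DXT5nm keeps it
        displacement,  # B: displacement (height)
        normal_x,      # A: normal X, where DXT5nm keeps it
    )
```

Because green and alpha hold the same data they would in a DXT5nm normal map, a shader that only reads those two channels sees nothing unusual.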

The traditional normal, ambient occlusion, and displacement maps (left), compared to packed geometry maps (right). While geometry maps exhibit some artifacts, they’re not particularly noticeable on matte surfaces.

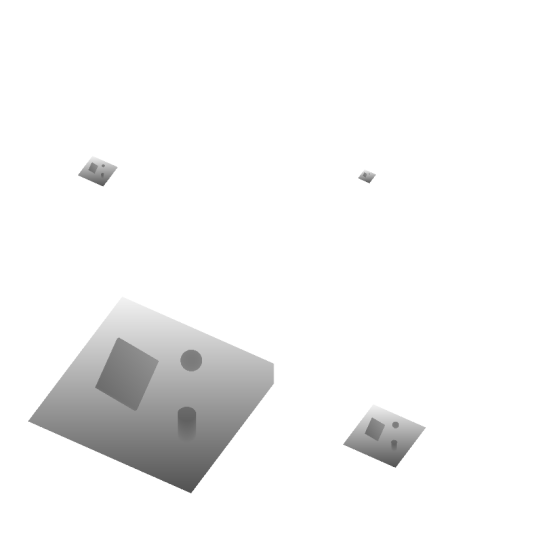

Worst-case example of Packed Geometry Maps applied to a mirror sphere.

This technique tends to work quite well. The texture artifacts it produces are relatively subtle on mostly matte surfaces. However, they look considerably worse on highly metallic and reflective surfaces, so the technique is mostly recommended for environment textures. On mostly non-metal surfaces such as dirt, grass, or wood, the differences between a packed geometry map and a traditional multi-texture setup are largely imperceptible. Given the constant compression ratio of DXT5, a packed geometry map requires 1/3 the texture memory of the three equivalent DXT5 input maps.
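As a rough worked example of the memory figure, the sketch below counts bytes for a single mip level. It relies on DXT5 using 16 bytes per 4x4 block, and it assumes all three source maps would otherwise be stored as DXT5 at the same resolution; the 2048x2048 size is just an example.

```python
def dxt5_bytes(width, height):
    """Size of one DXT5 mip level: 16 bytes per 4x4 pixel block."""
    blocks_x = (width + 3) // 4
    blocks_y = (height + 3) // 4
    return blocks_x * blocks_y * 16

# Three separate maps (normal, occlusion, displacement) versus one packed map.
separate = 3 * dxt5_bytes(2048, 2048)
packed = dxt5_bytes(2048, 2048)

print(separate // (1024 * 1024), "MiB separate")
print(packed // (1024 * 1024), "MiB packed")
```

If the occlusion or displacement maps were stored in a smaller format to begin with, the savings would be correspondingly lower.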

Won’t This Change Our Workflow?

As a matter of fact, no. Since packed geometry maps are essentially a combination of the color channels of various input textures, they can be produced automatically as part of the import pipeline of your game engine.

I’ve written a seamless extension to the Unity engine which will automatically build geometry maps for input textures as they are imported, updating them as source assets change, and saving them as unique standalone assets that can be incorporated in the final build.

By adding this extension to your project, geometry maps will be generated automatically as you import and update source texture maps. The utility is configurable and allows manual generation, as well as adding asset labels to generated geometry maps for easy searches. Certain directories can also be excluded if they contain textures your team does not want geometry maps generated for, and the file suffixes used to identify different texture types can be configured to best suit your naming scheme. It supports live reloading when source assets are changed, and is designed to be as unobtrusive as possible.
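To illustrate the suffix-based matching, here is a small Python sketch of how source maps might be grouped before packing. It is not the extension's code: the suffixes `_n`, `_ao`, and `_h` and the function name are hypothetical placeholders for whatever naming scheme you configure.

```python
import os

# Hypothetical suffixes; substitute your own naming scheme.
SUFFIXES = {"_n": "normal", "_ao": "occlusion", "_h": "displacement"}

def group_source_maps(paths, suffixes=SUFFIXES):
    """Group texture paths by base name, keyed by the kind of map each one is."""
    groups = {}
    for path in paths:
        stem, _ext = os.path.splitext(os.path.basename(path))
        for suffix, kind in suffixes.items():
            if stem.endswith(suffix):
                base = stem[: -len(suffix)]
                groups.setdefault(base, {})[kind] = path
                break  # a file matches at most one suffix
    return groups
```

Files that match no suffix, such as an albedo texture, are simply ignored.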

There are a few minor issues left with the editor extension, so I’m not ready to release it just yet, but I’ll post it to GitHub in the near future!

Using these new geometry maps is just as simple! I’ve written a CG include file which defines a simple function for unpacking geometry maps, which should serve as a mostly drop-in replacement for Unity’s “UnpackNormal” function. Unlike “UnpackNormal”, however, the “UnpackGeometryMap” function requires only a single texture sample for all three input maps, and returns a struct type for convenient access.

#include "GeometryMap.cginc"

// defines three values

// geo.normal

// geo.displacement

// geo.occlusion

GeometryMapSample geo = UnpackGeometryMap(tex2D(_GeoMap, IN.uv));
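For readers who want to see the data flow, here is a rough CPU-side sketch in Python of what unpacking involves. It is an illustration, not the contents of GeometryMap.cginc: it assumes the channel layout described earlier (occlusion in red, normal Y in green, displacement in blue, normal X in alpha) and a sample with channels in the 0 to 1 range. The field names mirror the comments above.

```python
import math
from collections import namedtuple

GeometryMapSample = namedtuple(
    "GeometryMapSample", ["normal", "displacement", "occlusion"]
)

def unpack_geometry_map(rgba):
    """Split one RGBA sample (floats in 0..1) into normal, displacement, occlusion."""
    r, g, b, a = rgba
    x = a * 2.0 - 1.0  # normal X lives in alpha
    y = g * 2.0 - 1.0  # normal Y lives in green
    # Z is not stored; rebuild it from the unit-length assumption.
    z = math.sqrt(max(0.0, 1.0 - x * x - y * y))
    return GeometryMapSample(normal=(x, y, z), displacement=b, occlusion=r)

geo = unpack_geometry_map((0.8, 0.5, 0.25, 0.5))
```

A flat sample like this one yields a normal pointing straight along Z, with occlusion and displacement read directly from red and blue.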

Including geometry map support in your new shaders is a snap. Geometry maps are also fully backwards-compatible with Unity’s built-in normal maps, so if you prefer, you can simply drop one into the “Normal Map” field of any standard shader.

In Summary

By utilizing unused texture color channels, it is possible to sacrifice some image quality for considerable savings on texture memory. A single backwards-compatible geometry map can be generated to represent normal, displacement, and occlusion information without considerable changes to artists’ workflow. This technique is particularly applicable to realtime applications designed to run on low-end hardware, where texture memory is of significant concern, and is suitable for representing most non-metal surfaces.

In the future, I’ll look into other compression techniques that don’t degrade with additional color channels. Even if the compressed size of a single map is larger, there could still be potential for savings when compared to several DXT5 textures.